I’m upgrading the storage in an offsite backup server to two new disks. The new disks are 3 TB each, which poses some challenges when it comes to partitioning. Here is some quick background on the issue.

Why is it an issue to partition disks larger than 2TB?

Historically, data on disks has been stored in 512-byte chunks called sectors. Addressing sectors with 32 bits, as the classic MBR partition table does, creates the following limit:

512 bytes * 2^32 = 2199023255552 bytes = 2 TiB

And there you have it. Newer disks have transitioned to “4K” (4096-byte) physical sectors, which would extend this limit to 16 TiB. But…
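The arithmetic behind both limits is easy to reproduce. Here is a minimal Python sketch; the helper name `max_addressable_bytes` is made up for illustration and is not part of any partitioning tool.

```python
# Largest capacity reachable with a fixed-width sector address.
# max_addressable_bytes is a hypothetical helper used only for this example.

def max_addressable_bytes(sector_size: int, address_bits: int = 32) -> int:
    """Return sector_size * 2**address_bits, in bytes."""
    return sector_size * 2 ** address_bits

TIB = 2 ** 40  # one tebibyte in bytes

limit_512 = max_addressable_bytes(512)
limit_4k = max_addressable_bytes(4096)

print(limit_512, limit_512 // TIB)  # 2199023255552 2
print(limit_4k, limit_4k // TIB)    # 17592186044416 16
```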

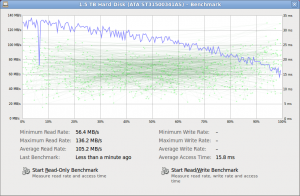

Why is partition alignment crucial to storage performance?

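To complicate things further, disks often expose 512-byte logical sectors to the operating system for legacy support. This might lead tools to believe it is okay to begin and end a partition on any 512-byte sector boundary, which might not coincide with a 4K sector boundary as actually stored on the disk. When that happens, a single filesystem block can straddle two physical sectors, forcing the drive to do a read-modify-write for what should be a single write.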
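To make the boundary problem concrete, here is a small Python sketch that tests whether a partition start, given in 512-byte logical sectors, falls on a 4096-byte physical sector boundary. The function name `is_aligned` is just an illustration. Sector 63 is used as an example because it was the traditional start of the first partition on older MBR layouts.

```python
LOGICAL = 512    # bytes per logical sector, as exposed to the OS
PHYSICAL = 4096  # bytes per physical sector on the disk

def is_aligned(start_sector: int) -> bool:
    """True if the byte offset of start_sector is a multiple of PHYSICAL."""
    return (start_sector * LOGICAL) % PHYSICAL == 0

print(is_aligned(63))    # False: 63 * 512 = 32256, not a multiple of 4096
print(is_aligned(2048))  # True: 2048 * 512 = 1048576 = 1 MiB
```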

Hardware.info has a good article illustrating this.

Wikipedia on 4K / Advanced Format

How do you align partitions in Ubuntu with GNU Parted?

GNU Parted is a tool that supports setting up a GUID Partition Table (GPT) under Linux. Parted has some parameters to aid in aligning partition starts and ends. Let’s launch parted with:

$ sudo parted --align optimal /dev/sdX

Where sdX is the drive we intend to view and/or modify. The --align optimal option is what aids the alignment. In parted, we can view the current partition table with the command print:

(parted) print
Model: ATA ST3000VX000-1CU1 (scsi)
Disk /dev/sdX: 3001GB
Sector size (logical/physical): 512B/4096B

As we can see, the drive has 4K physical sectors but presents 512-byte logical sectors. A tricky part I struggled with for hours was calculating the partition sizes with the unit set to sectors. In my opinion, parted could be clearer about which sector size it presents to the user. To figure this out I issued the following:

(parted) unit B
(parted) print
Model: ATA ST3000VX000-1CU1 (scsi)
Disk /dev/sdX: 3000592982016B
...
(parted) unit s
(parted) print
Model: ATA ST3000VX000-1CU1 (scsi)
Disk /dev/sdX: 5860533168s

Doing the calculation, bytes per sector:

3000592982016B / 5860533168s = 512 bytes/sector

So, even though this is a 4K drive, parted is using 512-byte sectors when showing partition starts, ends and sizes.
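The division can be double-checked with a couple of lines of Python, using the two disk sizes from the parted output above. A remainder of zero confirms that the sector unit is exactly 512 bytes.

```python
disk_bytes = 3000592982016   # "Disk /dev/sdX" with unit B
disk_sectors = 5860533168    # "Disk /dev/sdX" with unit s

bytes_per_sector, remainder = divmod(disk_bytes, disk_sectors)
print(bytes_per_sector, remainder)  # 512 0
```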

Setting up partitions with parted

First, let’s set up a GPT partition table with the following command:

(parted) mklabel gpt

This was the partition layout I wanted to achieve:

| Partition | Size | Usage |
| --- | --- | --- |
| sdX1 | 8GB | swap |
| sdX2 | 250GB | / |
| sdX3 | 1200GB | raid |
| sdX4 | 1542GB | raid |

Initially, I tried calculating the partition sizes using the sector unit to make sure that each partition border aligned with the physical sectors. Often, parted complained about the alignment with:

Warning: The resulting partition is not properly aligned for best performance.

What helped was using the unit MB for the starts and ends. Here are the final parted commands:

mkpart primary 1 0% 8000MB
mkpart primary 2 8000MB 258000MB
mkpart primary 3 258000MB 1458000MB
mkpart primary 4 1458000MB 100%

Notes: Using 0% as the start defaults to the first correctly aligned 1 MiB boundary. The same goes for 100%, which makes sure the last partition ends on an aligned boundary near the end of the disk. Here is the resulting partition layout:

(parted) unit s
(parted) print
Model: ATA ST3000VX000-1CU1 (scsi)
Disk /dev/sdX: 5860533168s
Sector size (logical/physical): 512B/4096B
Partition Table: gpt

Number  Start        End          Size         File system  Name  Flags
 1      2048s        15624191s    15622144s                 1
 2      15624192s    503906303s   488282112s                2
 3      503906304s   2847655935s  2343749632s               3     raid
 4      2847655936s  5860532223s  3012876288s               4     raid

(parted) unit compact
(parted) print
Model: ATA ST3000VX000-1CU1 (scsi)
Disk /dev/sdX: 3001GB
Sector size (logical/physical): 512B/4096B
Partition Table: gpt

Number  Start   End     Size    File system  Name  Flags
 1      1049kB  8000MB  7999MB               1
 2      8000MB  258GB   250GB                2
 3      258GB   1458GB  1200GB               3     raid
 4      1458GB  3001GB  1543GB               4     raid
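The start sectors in this output are not exactly the MB values I typed in. They are consistent with parted moving each boundary to the nearest 1 MiB (2048-sector) multiple. The Python sketch below reproduces the numbers under that assumption. It is my reading of the output, not a description of parted's actual algorithm, and `nearest_aligned_sector` is a made-up helper name. Parted's MB unit is 1,000,000 bytes.

```python
SECTOR = 512                    # logical sector size reported by parted
ALIGN = 1024 * 1024 // SECTOR   # 1 MiB expressed in sectors (2048)

def nearest_aligned_sector(megabytes: int) -> int:
    """Convert a parted MB value to sectors, rounded to the nearest 1 MiB multiple.

    Assumption: nearest-multiple rounding, which happens to match this post's output.
    """
    sectors = megabytes * 1_000_000 // SECTOR
    return (sectors + ALIGN // 2) // ALIGN * ALIGN

for mb in (8000, 258000, 1458000):
    print(mb, nearest_aligned_sector(mb))
# 8000 15624192
# 258000 503906304
# 1458000 2847655936
```

Each result matches the start sector of partitions 2, 3 and 4 in the listing above.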

To verify that the partitions are aligned, the following command can be executed, with P being the partition number:

(parted) align-check optimal P
P aligned
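As a rough manual cross-check, the sector numbers from the print output can also be tested by hand. The Python sketch below assumes that "aligned" means a multiple of the 4096-byte physical sector, and additionally tests for 1 MiB alignment. Parted's align-check relies on alignment values reported by the device, so this is only an approximation of what it does.

```python
SECTOR = 512        # logical sector size parted reports
PHYSICAL = 4096     # physical sector size of the drive
MIB = 1024 * 1024   # 1 MiB in bytes

# (start, end) in 512-byte sectors, copied from the print output
partitions = {
    1: (2048, 15624191),
    2: (15624192, 503906303),
    3: (503906304, 2847655935),
    4: (2847655936, 5860532223),
}

def is_aligned(sector: int, boundary: int) -> bool:
    """True if the byte offset of sector is a multiple of boundary."""
    return (sector * SECTOR) % boundary == 0

for number, (start, end) in partitions.items():
    # end is inclusive, so the partition's far boundary is sector end + 1
    print(number,
          is_aligned(start, PHYSICAL),
          is_aligned(end + 1, PHYSICAL),
          is_aligned(start, MIB))
# 1 True True True
# 2 True True True
# 3 True True True
# 4 True True True
```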

This became a long post. In the future I will try to cover handling alignment between the filesystem layer and the partitions.

Let me know how it goes for you!