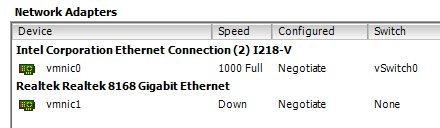

I finally got around to trying out the ASRock Z97 Extreme 6 motherboard and seeing how well it is supported by ESXi 5.5-u2. There seem to be quite a few issues with getting the Intel I218 to work on some Z97 boards, while the very same driver has been tested to work well on Z87 boards with the Intel I217 controller, for example the ASRock Z87 Extreme 6.

I recently found that a VMware forum user called GLRoman had managed to compile an updated Intel e1000e driver that is required for this NIC. By the way, I encourage everyone interested in getting drivers to work for ESXi to read through this thread and the threads it links to. Very interesting!

How to add Intel and Realtek drivers to an ESXi 5.5 U2 ISO

While I was at it, I also decided to try adding Realtek drivers to the ESXi 5.5-u2 ISO in an attempt to get both NICs on the ASRock Z97 Extreme 6 board to work. Please see my previous blog post on adding the updated Intel driver to an ISO. I used the very same method for this test, with the addition of the Realtek drivers. In summary, these are the packages, including links to them, that are required to get both NICs operational.

- VMware_bootbank_net-r8168_8.013.00-3vmw.510.0.0.799733.vib – Realtek r8168 and r8111e *

- VMware_bootbank_net-r8169_6.011.00-2vmw.510.0.0.799733.vib – Realtek r8169

- net-e1000e-3.1.0.2-glr-offline_bundle.zip – Intel e1000e

The Intel driver is an offline bundle while the Realtek driver packages are VIB files. The Realtek VIBs come compressed in a zip file and need to be extracted before the ESXi-Customizer-PS script can use them.
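The extraction step can of course be done by hand, but if you prefer to script it, here is a minimal sketch in Python. It only pulls the `.vib` files out of a zip archive and drops them into one flat folder. The function name, the archive name and the folder name are my own placeholders, not anything the driver packages or ESXi-Customizer-PS prescribe.

```python
import zipfile
from pathlib import Path


def extract_vibs(zip_path, dest_dir):
    """Copy every .vib file found in zip_path into dest_dir, ignoring subfolders."""
    dest = Path(dest_dir)
    dest.mkdir(parents=True, exist_ok=True)
    extracted = []
    with zipfile.ZipFile(zip_path) as archive:
        for member in archive.infolist():
            # Skip directories and anything that is not a VIB package.
            if member.is_dir() or not member.filename.lower().endswith(".vib"):
                continue
            # Flatten any folder structure inside the archive.
            target = dest / Path(member.filename).name
            target.write_bytes(archive.read(member))
            extracted.append(target)
    return sorted(extracted)


if __name__ == "__main__":
    # Hypothetical file and folder names, adjust to your own download.
    for vib in extract_vibs("realtek-drivers.zip", "pkg"):
        print(vib)
```

The Intel offline bundle is left as a zip, so it can simply be copied into the same folder next to the extracted VIBs.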

How to make ASRock Z97 Extreme 6 boot ESXi when installed on USB

As I have said earlier, I’m fond of installing ESXi to a USB stick to keep it separate from the datastores. From what I have understood, ESXi uses GPT by default, and I did not manage to get it to boot with UEFI that way. I found that it is possible to add an option to the installation process which makes it use MBR instead of GPT.

During boot of the installation media, press SHIFT + O when prompted. A prompt with “runweasel” will appear. Press space, add “formatwithmbr” and press Enter to continue the installation as normal.

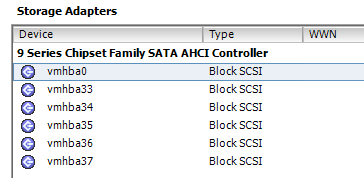
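If you want to double check afterwards which partition table actually ended up on the stick, a rough sketch like the one below could be run against a raw image of the stick (or against the device itself, with sufficient privileges). It relies on two well-known on-disk signatures: the `0x55 0xAA` boot signature at the end of the first sector, and the `EFI PART` string that starts a GPT header in the second sector. The 512-byte sector size, the function name and the image file name are assumptions on my part.

```python
SECTOR = 512  # assumed logical sector size


def partition_scheme(path):
    """Return 'gpt', 'mbr' or 'unknown' based on the first two sectors of path."""
    with open(path, "rb") as dev:
        data = dev.read(2 * SECTOR)
    # Both plain MBR disks and GPT disks (protective MBR) end sector 0 with 55 AA.
    if len(data) < SECTOR or data[510:512] != b"\x55\xaa":
        return "unknown"
    # A GPT header lives in sector 1 and starts with the ASCII string "EFI PART".
    if data[SECTOR:SECTOR + 8] == b"EFI PART":
        return "gpt"
    return "mbr"


if __name__ == "__main__":
    # Hypothetical image file name, e.g. a dump of the USB stick.
    print(partition_scheme("usb-stick.img"))
```

A stick installed with the extra option should report `mbr`, while a default installation should report `gpt`.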

ESXi support for onboard AHCI SATA controller

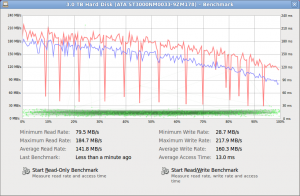

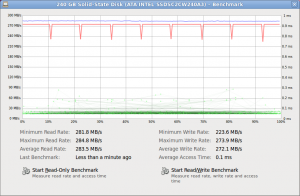

In the previous article I covered the issue of VMware removing support for some onboard SATA controllers. I did not test whether ESXi 5.5 U2 would detect the onboard SATA AHCI controller on this motherboard without the sata-xahci driver package, since I decided to include it right away. As can be seen from the screenshot, the onboard Intel SATA controller is detected, and it is possible to connect HDDs/SSDs to these ports and use them as datastores. ESXi does not support onboard SATA RAID since it is a kind of software RAID.

VT-d verification on the ASRock Z97 Extreme 6

Unfortunately, at the time of this test I did not have a VT-d capable CPU installed in the system. Therefore, I cannot verify that VT-d is working with this board. From what I have read, it is possible to get VT-d to work on the Z97 chipset, and other people have managed to get it working on similar boards. Hopefully, I will have the chance to confirm this at a later point.

Conclusion

It is possible to make both onboard NICs on the ASRock Z97 Extreme 6 available to ESXi 5.5 U2 by adding drivers for them to the ESXi ISO image. It is also possible to use the onboard SATA controller to connect drives and use them as datastores.