My previous post covered how to clone an Ubuntu Server installation to a new drive. That method assumed identical drives and identical partition schemes. However, I have run out of space on one of the file servers, which has the following layout:

Device Boot Start End Blocks Id System

/dev/sda1 * 2048 2146435071 1073216512 83 Linux

/dev/sda2 2146435072 2147483647 524288 5 Extended

/dev/sda5 2146437120 2147479551 521216 82 Linux swap

The issue now is that if I increase the size of the drive, I cannot grow the filesystem, since the swap sits at the end of the disk. Fine, I figured I could remove the swap, grow the sda1 partition and then add the swap at the end again. I booted the VM from a Live CD, launched GParted and tried the operation. This failed with the following output:

resize2fs: /dev/sda: The combination of flex_bg and

!resize_inode features is not supported by resize2fs

After some searching and new attempts with a standalone GParted Live CD, I still got the same result. Therefore, I figured I could try to copy the installation to a completely new partition layout.
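The error message names two ext4 feature flags, so it can be useful to check whether a filesystem has that combination before attempting a resize. Here is a minimal sketch, assuming the feature list is taken from the "Filesystem features:" line printed by `tune2fs -l`. The function name `feature_check` is made up for illustration, and it only reports the condition named in the message, nothing more.

```shell
#!/bin/sh
# feature_check FEATURES
# FEATURES is the space-separated list from the "Filesystem features:" line.
# Prints "flex_bg without resize_inode" for the combination resize2fs
# complained about, and "ok" otherwise.
feature_check() {
    features=" $1 "
    case "$features" in
        *" flex_bg "*)
            case "$features" in
                *" resize_inode "*) echo "ok" ;;
                *) echo "flex_bg without resize_inode" ;;
            esac
            ;;
        *) echo "ok" ;;
    esac
}

# Hypothetical usage against a real partition (needs root):
#   feature_check "$(sudo tune2fs -l /dev/sda1 | sed -n 's/^Filesystem features: *//p')"
```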

Process

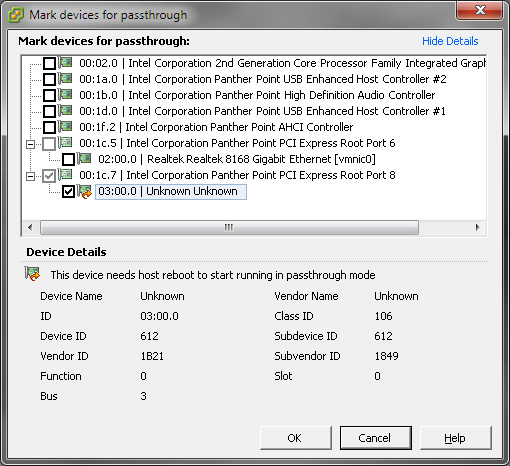

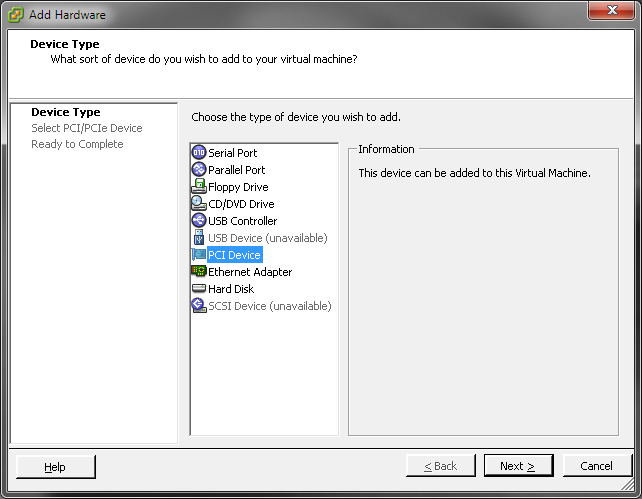

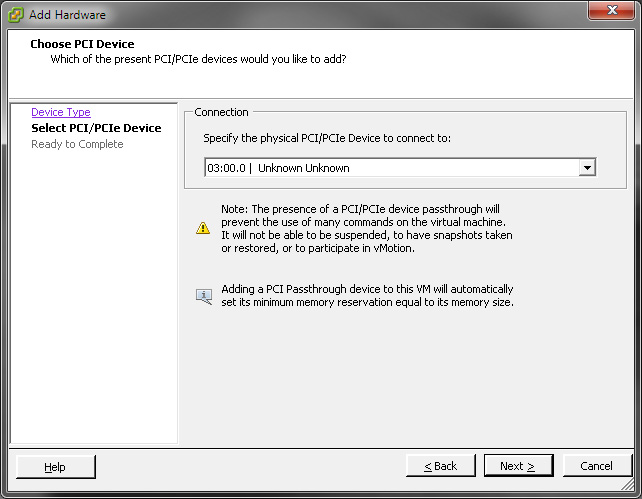

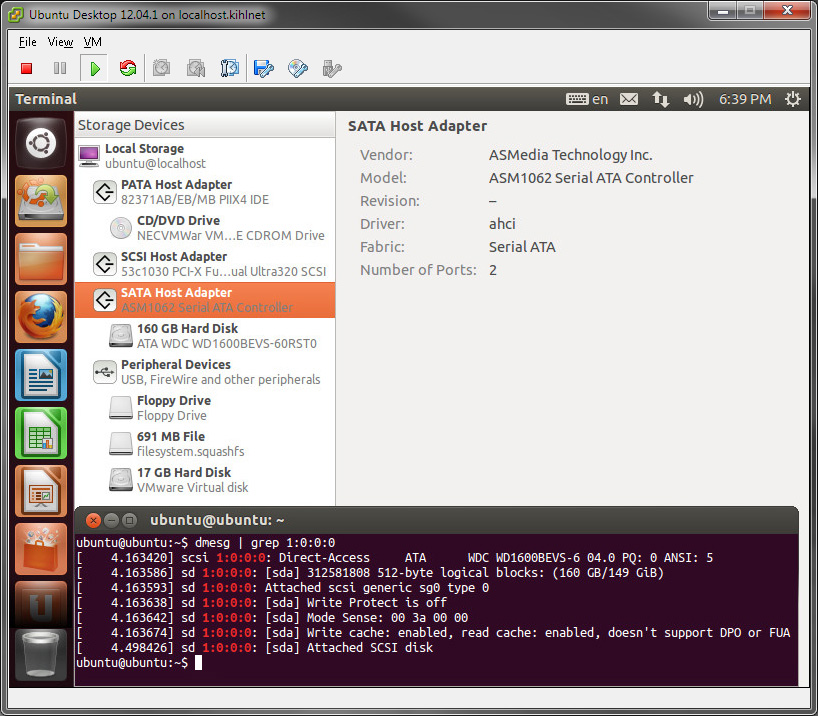

Set up the new filesystem

Add the new (destination) drive and the old (source) drive to the VM and boot it from a Live CD, preferably of the same version as the source OS. Set up the new drive the way you want it. Here is the layout of my new drive (sda) and old drive (sdb):

Device Boot Start End Blocks Id System

/dev/sda1 2048 8390655 4194304 82 Linux swap / Solaris

/dev/sda2 8390656 4294965247 2143287296 83 Linux

Device Boot Start End Blocks Id System

/dev/sdb1 * 2048 2145386495 1072692224 83 Linux

/dev/sdb2 2145388542 2147481599 1046529 5 Extended

/dev/sdb5 2145388544 2147481599 1046528 82 Linux swap / Solaris
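The copy step in the next section reads from /mnt/old and writes to /mnt/new, so both filesystems have to be mounted there first. Here is a sketch of that step, assuming the layout above (sda2 is the new ext4 partition, sdb1 the old root). The helper `mount_plan` is hypothetical and only prints the commands for review. Mounting the old drive read-only is an extra precaution, not something the procedure requires.

```shell
#!/bin/sh
# mount_plan NEW_PART OLD_PART
# Prints the mount commands, one per line, without running them.
mount_plan() {
    echo "sudo mkdir -p /mnt/new /mnt/old"
    echo "sudo mount $1 /mnt/new"
    # Read-only, so the source cannot be modified by accident.
    echo "sudo mount -o ro $2 /mnt/old"
}

mount_plan /dev/sda2 /dev/sdb1
```

Once the printed commands look right for your devices, run them by hand or pipe the output into `sh`.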

Copy the installation to the new partition

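For this I use the following rsync command. The trailing slash on the source copies the contents of /mnt/old, including any hidden files at the top level:

$ sudo rsync -ahvxP /mnt/old/ /mnt/new/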

Time to wait…
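A note on the source path: a shell glob such as /mnt/old/* is expanded by the shell and does not match top-level dotfiles, whereas a trailing slash hands the whole directory to rsync. The sketch below shows the difference in a throwaway directory. The helper names `glob_matches` and `all_entries` are made up for the demonstration, and no rsync is involved.

```shell
#!/bin/sh
# glob_matches DIR: what the shell expands DIR/* to (names only).
glob_matches() { ( cd "$1" && set -- *; echo "$*" ); }

# all_entries DIR: every top-level entry, hidden ones included.
# LC_ALL=C keeps the sort order predictable.
all_entries() { LC_ALL=C ls -A "$1" | tr '\n' ' ' | sed 's/ $//'; }

demo=$(mktemp -d)
mkdir -p "$demo/old/etc"
touch "$demo/old/.hidden" "$demo/old/etc/fstab"

glob_matches "$demo/old"   # prints: etc
all_entries "$demo/old"    # prints: .hidden etc

rm -rf "$demo"
```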

Fix fstab on the new drive

Identify the UUIDs of the partitions:

$ ll /dev/disk/by-uuid/

5de1...9831 -> ../../sda1

f038...185d -> ../../sda2

Remember, we changed the partition layout so make sure you pick the right ones. In this example, sda1 is swap and sda2 is the ext4 filesystem.

Change the UUID in fstab:

$ sudo nano /mnt/new/etc/fstab

Look for the following lines and change the old UUIDs to the new ones. Once again, the comments in the file are from the initial installation, so do not let them confuse you.

# / was on /dev/sda1 during installation

UUID=f038...185d / ext4 discard,errors=remount-ro 0 1

# swap was on /dev/sda5 during installation

UUID=5de1...9831 none swap sw 0 0

Save (CTRL+O) and exit (CTRL+X) nano.
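A mistyped UUID in fstab is easy to miss and only shows up at boot, so a quick check before moving on can help. Here is a minimal sketch, assuming fstab entries begin with `UUID=` at the start of the line and that the live session lists the partitions under /dev/disk/by-uuid. The function name `check_fstab_uuids` is hypothetical.

```shell
#!/bin/sh
# check_fstab_uuids FSTAB [BY_UUID_DIR]
# Prints every UUID referenced in FSTAB that has no entry in BY_UUID_DIR
# (default /dev/disk/by-uuid) and returns non-zero if any is missing.
# Commented lines are ignored because they do not start with "UUID=".
check_fstab_uuids() {
    fstab=$1
    dir=${2:-/dev/disk/by-uuid}
    missing=0
    for uuid in $(sed -n 's/^UUID=\([^[:space:]]*\).*/\1/p' "$fstab"); do
        if [ ! -e "$dir/$uuid" ]; then
            echo "missing: $uuid"
            missing=1
        fi
    done
    return "$missing"
}

# Hypothetical usage from the live session:
#   check_fstab_uuids /mnt/new/etc/fstab && echo "all UUIDs found"
```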

Set up GRUB on the new drive

This is the same process as in the previous post, only the mount points are slightly different this time:

$ sudo mount --bind /dev /mnt/new/dev

$ sudo mount --bind /dev/pts /mnt/new/dev/pts

$ sudo mount --bind /proc /mnt/new/proc

$ sudo mount --bind /sys /mnt/new/sys

$ sudo chroot /mnt/new

With the help of chroot, the GRUB tools will operate on the new virtual drive rather than on the live session. Run the following commands to reinstall the boot loader:

# grub-install /dev/sda

# grub-install --recheck /dev/sda

# update-grub

Let's exit the chroot and unmount the directories:

# exit

$ sudo umount /mnt/new/dev/pts

$ sudo umount /mnt/new/dev

$ sudo umount /mnt/new/proc

$ sudo umount /mnt/new/sys

$ sudo umount /mnt/new
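The bind mounts and their teardown can also be written as a pair of loops, which makes the ordering explicit: /dev/pts has to be released before /dev. This is a sketch only. `TARGET`, `DRY_RUN`, `run`, `bind_all` and `unbind_all` are names invented here, and by default the functions just print the commands instead of running them.

```shell
#!/bin/sh
TARGET=${TARGET:-/mnt/new}
DRY_RUN=${DRY_RUN:-1}   # 1 = only print the commands, 0 = execute them

# Print the command in dry-run mode, execute it otherwise.
run() {
    if [ "$DRY_RUN" = 1 ]; then echo "$*"; else "$@"; fi
}

# Bind the live session's virtual filesystems into the chroot target.
bind_all() {
    for d in /dev /dev/pts /proc /sys; do
        run sudo mount --bind "$d" "$TARGET$d"
    done
}

# Unmount so that /dev/pts goes before its parent /dev.
unbind_all() {
    for d in /sys /proc /dev/pts /dev; do
        run sudo umount "$TARGET$d"
    done
}

bind_all
unbind_all
```

Set `DRY_RUN=0` to actually run the commands. The chroot, the GRUB commands and the final `umount /mnt/new` are left out on purpose.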

Cleanup and finalizing

Everything should now be in place to boot the OS from the new drive. Shut down the VM, remove the old virtual drive from the VM and remove the virtual Live CD. Fire up the VM in a console and verify that it boots correctly.

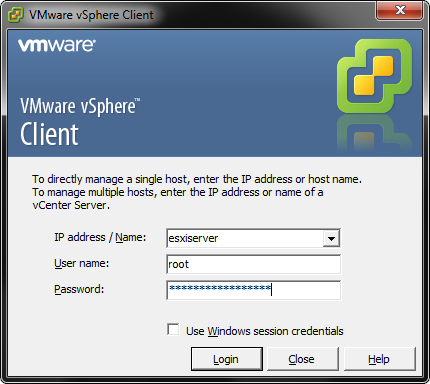

As a friendly reminder – now that the VM has been removed and re-added to the inventory it is removed from the list of automatically started virtual machines. If you use it, head over to the host configuration – Software – Virtual Machine