After struggling to find a simple way to use a Maxim (formerly Dallas) DS9490R USB to 1-Wire/iButton adapter on Ubuntu 18.04 LTS Server (Bionic Beaver) with python-ow, I decided to write down my experience here.

Orientation

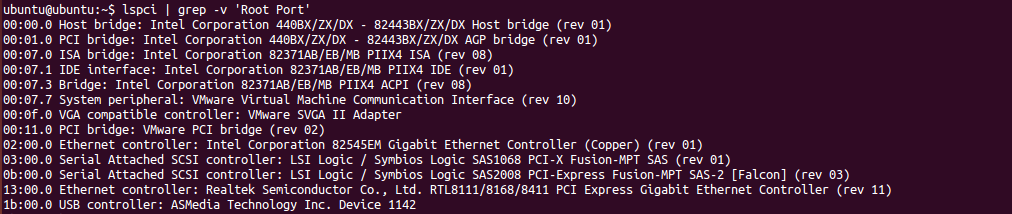

Plug it in! You should see something like:

$ lsusb
Bus 002 Device 004: ID 04fa:2490 Dallas Semiconductor DS1490F 2-in-1 Fob, 1-Wire adapter

Run the following command to load the built-in kernel module:

$ sudo modprobe ds2490

You will now be able to reach the 1-Wire bus master at this location:

$ ls -l /sys/bus/w1/

If you have a working temperature sensor connected, such as the DS18B20, you can run the following command to get its reading:

$ cat /sys/bus/w1/devices/28*/w1_slave
71 01 4b 46 7f ff 0f 10 56 : crc=56 YES
71 01 4b 46 7f ff 0f 10 56 t=22125

The t= value is in thousandths of a degree Celsius, so this reading is 22.125 °C.

I want to process the temperature in Python, and it's quite possible to just read this value from the file system. However, I would like to use the python-ow package instead.
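For completeness, here is a rough sketch of the file system approach mentioned above. It assumes the sensors show up under /sys/bus/w1/devices/ and that the file has the two-line layout shown earlier. The function names parse_w1_slave and read_all are my own, not part of any package.

```python
import glob


def parse_w1_slave(text):
    """Return the temperature in degrees Celsius, or None if the reading is unusable."""
    lines = text.strip().splitlines()
    # The first line ends with YES when the CRC check passed
    if len(lines) < 2 or not lines[0].strip().endswith("YES"):
        return None
    marker = lines[1].find("t=")
    if marker == -1:
        return None
    # The value after t= is in thousandths of a degree Celsius
    return int(lines[1][marker + 2:]) / 1000.0


def read_all(pattern="/sys/bus/w1/devices/28*/w1_slave"):
    """Read every matching sensor file (assumed path) and return {path: temperature}."""
    readings = {}
    for path in glob.glob(pattern):
        with open(path) as handle:
            readings[path] = parse_w1_slave(handle.read())
    return readings
```

Calling read_all() on a machine without the w1 kernel driver loaded simply returns an empty dictionary.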

python-ow

Start off with installing the python-ow package with:

$ sudo apt-get install python-ow

This will also install the owfs-common package. Launch a Python shell to verify the installation:

$ python
Python 2.7.15rc1 (default, Apr 15 2018, 21:51:34)
[GCC 7.3.0] on linux2
Type "help", "copyright", "credits" or "license" for more information.
>>> import ow
>>> ow.init('u')
Could not open the USB bus master. Is there a problem with permissions?
Traceback (most recent call last):
  File "<stdin>", line 1, in <module>
  File "/usr/lib/python2.7/dist-packages/ow/__init__.py", line 224, in init
    raise exNoController
ow.exNoController

import ow should work, but you will most likely run into the permission issue shown here after the ow.init('u') command. It is possible to run Python as root with sudo python, and then no error is thrown if everything else is working. However, it's a bad idea to run all scripts as root, so we need to take care of this issue.
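In a script, the failure above can be caught instead of ending in a traceback. This is only a sketch: open_bus is a name I made up, and the ow module is passed in as an argument so the function can be tried without the adapter attached. The only python-ow names used are init and exNoController, both visible in the session above.

```python
def open_bus(ow_module, device="u"):
    """Try to initialise the bus master; return True on success, False otherwise."""
    try:
        ow_module.init(device)
    except ow_module.exNoController:
        # Same condition as the traceback above: no usable bus master,
        # typically because of missing permissions on the USB device
        return False
    return True
```

With the real package this would be called as open_bus(ow) after import ow.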

libusb permissions

A kernel module (ds2490) is loaded by default, which is why the first step works fine. However, python-ow and owfs talk to the adapter through the libusb library, and we need to disable the kernel module for this to work. Edit the kernel module blacklist:

$ sudo nano /etc/modprobe.d/blacklist.conf

and add the following to the end of the file:

# Disable auto loading of Dallas USB-1-wire adapter
blacklist ds2490

Next we need to allow a user to use this specific USB device. To do this we create a udev rule:

$ sudo nano /etc/udev/rules.d/99-one-wire.rules

and add the following content:

ATTRS{idVendor}=="04fa", ATTRS{idProduct}=="2490", GROUP="plugdev", MODE="0664"

This gives the user group plugdev access to the USB adapter. idVendor and idProduct identify the USB device; they match the ID 04fa:2490 reported by the lsusb command shown earlier. Normal users are members of the plugdev group by default. Verify this with the groups command.
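The same membership check can be done from Python, which is handy at the top of a script that needs the adapter. This is a small sketch using only the standard library; the function names are my own.

```python
import grp
import os


def current_group_names():
    """Return the group names of the current process, much like the groups command."""
    gids = set(os.getgroups()) | {os.getegid()}
    names = []
    for gid in sorted(gids):
        try:
            names.append(grp.getgrgid(gid).gr_name)
        except KeyError:
            # Group ID without an entry in the group database
            names.append(str(gid))
    return names


def has_group(group_name, group_names):
    """Return True if group_name is among group_names."""
    return group_name in group_names
```

For example, has_group('plugdev', current_group_names()) tells you whether the udev rule above applies to the user running the script.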

More information on this topic can be found here:

https://www.maximintegrated.com/en/app-notes/index.mvp/id/5917

Important: Reboot the OS in order for all changes to take effect.
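After the reboot, one way to confirm that the blacklist took effect is to check that ds2490 no longer appears in /proc/modules, where each line starts with the name of a loaded module. A minimal sketch, with function names of my own choosing:

```python
def module_is_loaded(name, proc_modules_text):
    """Return True if a module called name is listed in the given /proc/modules text."""
    for line in proc_modules_text.splitlines():
        fields = line.split()
        # The first field on each line is the module name
        if fields and fields[0] == name:
            return True
    return False


def ds2490_is_loaded(path="/proc/modules"):
    """Check the running kernel for the ds2490 module."""
    with open(path) as handle:
        return module_is_loaded("ds2490", handle.read())
```

If ds2490_is_loaded() still returns True after rebooting, the blacklist entry is probably not being picked up.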

Verify functionality

Let’s see if it works. You can launch a python shell and enter the snippets shown earlier. Here is a more complete code section to try out:

(Stolen from https://raspberrypi.stackexchange.com/questions/62292/usage-of-python-ow-one-wire-file-system-python-package-for-reading-1-wire-ds18b2 and modified to taste)

#!/usr/bin/python
import ow

# 'u' selects the USB bus master (the DS9490R)
ow.init('u')

# List every device found on the 1-Wire bus
sensorlist = ow.Sensor('/').sensorList()
for sensor in sensorlist:
    print('Device Found')
    print('Address: ' + sensor.address)
    print('Family: ' + sensor.family)
    print('ID: ' + sensor.id)
    print('Type: ' + sensor.type)
    # Only temperature sensors have a temperature attribute
    if sensor.type == 'DS18B20':
        print('Temperature: ' + sensor.temperature)
    print(' ')
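To do something useful with the reading you will probably want a number rather than text. The script above concatenates sensor.temperature with a string, so I am assuming here that python-ow hands the value back as a string, possibly padded with spaces. The helper names below are hypothetical.

```python
def to_celsius(raw):
    """Convert a raw temperature value (assumed to be a string) to a float, or None."""
    try:
        return float(str(raw).strip())
    except ValueError:
        # Empty or non-numeric value, for example from a failed read
        return None


def to_fahrenheit(celsius):
    """Convert degrees Celsius to degrees Fahrenheit."""
    return celsius * 9.0 / 5.0 + 32.0
```

Inside the loop this would be used as to_celsius(sensor.temperature), checking for None before doing any arithmetic on the result.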